In part 3 of this series, I will start with a quick recap of where we left off:

- We have a source VNX and a target XtremIO, and we need to migrate boot LUNs and VMware RDMs from the VNX to the XtremIO

- The SANcopy enabler is installed on the source VNX

- The VNX SP ports are zoned to the XtremIO target brick (in my case, brick 4 of a 4-brick cluster)

- We have identified our source LUNs and created our target XtremIO volumes

Our boot-from-SAN LUNs do not need incremental SANcopy sessions, since each one is only 30 GB. To cut over a boot LUN, we will do the following:

- Place the host in maintenance mode to evacuate all VMs from it

- Shut down the host

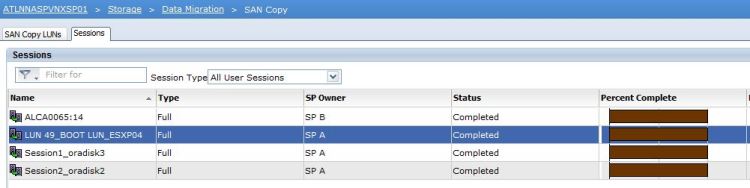

- Start the SANcopy session

- Once the copy completes, remove the source LUN from the host's storage group on the VNX

- On the XtremIO, map the new boot volume to the VMware host as HLU 0

- Adjust the host's boot-from-SAN policy to point at the XtremIO (in my case, these are UCS hosts)

- Boot the host and verify that it boots successfully

- Exit maintenance mode

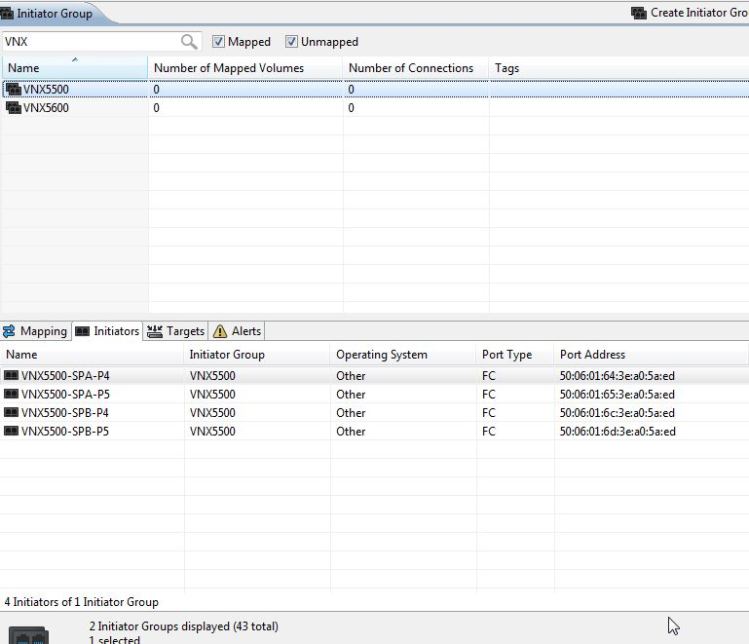

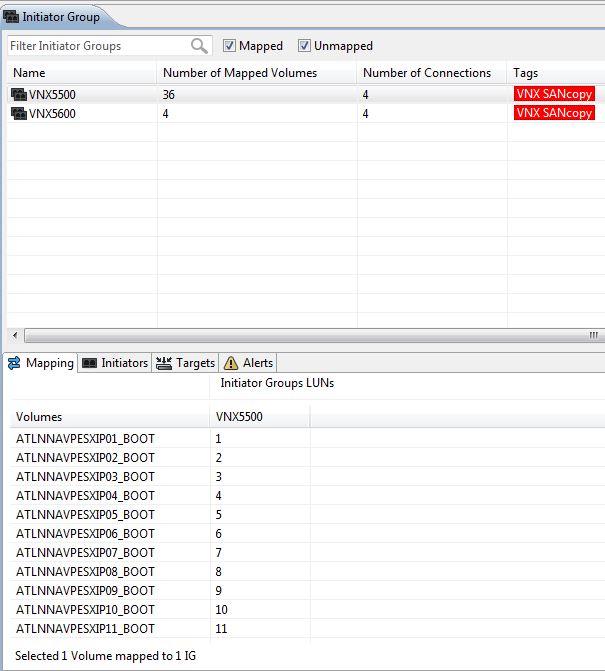

The SANcopy session can be created in either the VNX GUI or the CLI (naviseccli). Either way, you will need to know:

- The source LUN name (or ID)

- The source LUN GUID (WWN)

- The target XtremIO FC port WWNs

- The HLU number at which each target volume is mapped to the VNX initiator group

As you can see here, I mapped host 1’s target boot volume to the VNX initiator group as HLU 1 (host 2 as HLU 2, and so on). I decided to create my SANcopy sessions in the CLI, since I have about 40 boot LUN sessions to create.

naviseccli -h 10.10.10.10 -User sysadmin -Password sysadmin -Scope 0 sancopy -create -name "LUN 47_BOOT LUN_ESXP10" -srcwwn 60:06:01:60:BB:B4:30:00:61:28:DD:1D:82:F0:E1:11 -fibre -destportwwn 51:4f:0c:50:62:07:af:30 10 -throttle 8 -verify -o

The general syntax is:

naviseccli -h <SP IP> -User <username> -Password <password> -Scope 0 sancopy -create -name <session name> -srcwwn <source LUN GUID / WWN> -fibre -destportwwn <XtremIO target FC WWN> <destination LUN number (HLU)> -throttle <0-10> -verify -o
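With around 40 sessions to create, a mistyped WWN or HLU is easy to miss. One option is a small pre-flight check before anything is sent to the array. The bash sketch below is hypothetical: the function names `is_wwn` and `check_session` are made up for illustration, and the expected lengths (16 colon-separated bytes for the source LUN WWN, 8 for the XtremIO port WWN) are inferred from the example command above rather than taken from EMC documentation.

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight checks for one SANcopy session definition (a sketch).
# Byte counts are inferred from the example command: the source LUN WWN has
# 16 colon-separated hex bytes, the XtremIO target port WWN has 8.

# Succeeds if $1 is a colon-separated WWN made of exactly $2 hex bytes.
is_wwn() {
  local wwn="$1" bytes="$2"
  local re="^[0-9A-Fa-f]{2}(:[0-9A-Fa-f]{2}){$((bytes - 1))}\$"
  [[ "$wwn" =~ $re ]]
}

# Prints one message per problem to stderr; returns non-zero if any were found.
check_session() {
  local srcwwn="$1" portwwn="$2" hlu="$3" rc=0
  if ! is_wwn "$srcwwn" 16; then
    echo "bad source LUN WWN: $srcwwn" >&2
    rc=1
  fi
  if ! is_wwn "$portwwn" 8; then
    echo "bad XtremIO port WWN: $portwwn" >&2
    rc=1
  fi
  if ! [[ "$hlu" =~ ^[0-9]+$ ]]; then
    echo "bad HLU (expected a non-negative integer): $hlu" >&2
    rc=1
  fi
  return "$rc"
}
```

For example, `check_session 60:06:01:60:BB:B4:30:00:61:28:DD:1D:82:F0:E1:11 51:4f:0c:50:62:07:af:30 10` returns success for the values in the example command, while a truncated WWN or a non-numeric HLU prints an error and returns non-zero.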

When scripting the session-create commands, I alternate between storage controllers (SCs) and their ports on the XtremIO to balance the load.
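Scripting the session-create step with port alternation could look roughly like the bash sketch below. It reads a hypothetical CSV (session name, source LUN WWN, HLU), rotates round-robin through a list of XtremIO port WWNs, and prints one naviseccli command per line for review instead of running it. The CSV layout, the function name `gen_sancopy_cmds`, the `DEST_PORTS` array and the `PORT_WWN_2` placeholder are all assumptions; only the command template and the first port WWN come from the example above.

```shell
#!/usr/bin/env bash
# Hypothetical dry-run generator for SANcopy boot-LUN session commands (a sketch).
# Assumptions, adjust for your environment:
#   - stdin lines are "session name,source LUN WWN,HLU" (CSV, no header)
#   - SP_IP / NAVI_USER / NAVI_PASS default to the example values above
#   - DEST_PORTS lists the XtremIO FC port WWNs zoned to the VNX SPs

SP_IP="${SP_IP:-10.10.10.10}"
NAVI_USER="${NAVI_USER:-sysadmin}"
NAVI_PASS="${NAVI_PASS:-sysadmin}"
THROTTLE="${THROTTLE:-8}"

DEST_PORTS=(
  "51:4f:0c:50:62:07:af:30"   # port WWN from the example command
  "PORT_WWN_2"                # placeholder: replace with a port on the other SC
)

gen_sancopy_cmds() {
  local i=0 n=${#DEST_PORTS[@]} name srcwwn hlu port
  # "|| [[ -n $name ]]" also handles a last line with no trailing newline.
  while IFS=, read -r name srcwwn hlu || [[ -n "$name" ]]; do
    hlu="${hlu%$'\r'}"                 # tolerate CSVs saved with CRLF endings
    if [[ -z "$name" ]]; then continue; fi   # skip blank lines
    # Round-robin over DEST_PORTS so consecutive sessions use different ports.
    port="${DEST_PORTS[$((i % n))]}"
    i=$((i + 1))
    # Print (do not execute) the command so it can be reviewed first.
    printf 'naviseccli -h %s -User %s -Password %s -Scope 0 sancopy -create -name "%s" -srcwwn %s -fibre -destportwwn %s %s -throttle %s -verify -o\n' \
      "$SP_IP" "$NAVI_USER" "$NAVI_PASS" "$name" "$srcwwn" "$port" "$hlu" "$THROTTLE"
  done
}
```

Assuming the script is saved as `gen_sancopy.sh`, one way to use it is `source gen_sancopy.sh` followed by `gen_sancopy_cmds < boot_luns.csv > create_sessions.sh`, then reviewing `create_sessions.sh` before running it. Listing the ports in alternating controller order (a port on one SC, then a port on the other) keeps consecutive sessions on different SCs.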

For the incremental sessions, we first need to create a Reserved LUN Pool for them to use. We will follow EMC best practices for that and then create the incremental sessions accordingly. More on that soon!